Mining the gray area between bugs and features for product opportunities

It’s never been really clear to me what the difference is between a bug report and a feature request. Yes, of course, there are clear cases at the extremes, but there is a huge gray zone with a lot of overlap. And it recently dawned on me that clearing up this gray zone actually surfaces some very significant product opportunities.

Both bugs and feature requests point to something missing from the product, and that something actually stands in the way of user success. Both need to be analysed, prioritised, developed and tested. Both come with a price tag. And in the words of an old-school project manager I worked with about two decades ago, the major difference is who picks up the bill. “Customers pay for feature requests, but we pay for bugs”, she said. “So make sure that from now on everything possible is a feature request”. Needless to say, that led to some awkward conversations.

Two decades later, I think I found the missing clue in the wonderful book Mismatch by Kat Holmes. It cleared up my thinking not just about bugs and feature requests, but also about what makes a software solution appropriate.

Holmes uses the concept of a Persona Spectrum to show a range of people with different capabilities who might have the same needs and fit into the same user persona. For example, when considering people’s visual capabilities, there’s a whole range of disabilities people might face, from full or partial blindness, to poor vision caused by age or medical conditions, to colour blindness and so on. But there’s also a group of people whose vision is medically perfectly fine, yet who might still experience visual impairment when using our products. Someone working in a dimly lit environment, or sitting on a beach in direct sunlight, might need special design considerations that improve visual contrast and cognition. Figuring out which part of that range we want to support is crucial for avoiding unnecessary disappointment and missed expectations.

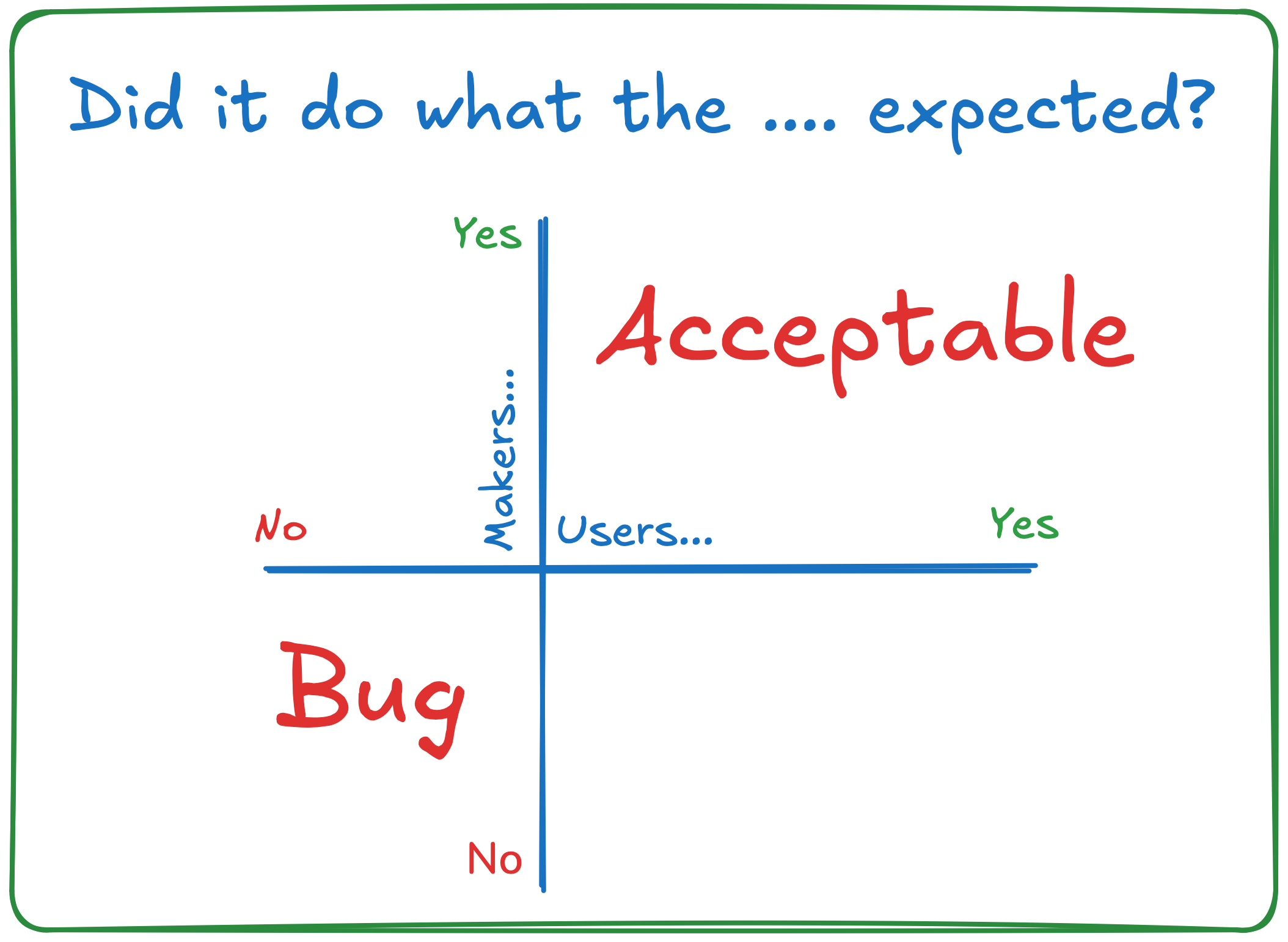

That, I think, is the missing piece of the puzzle: expectations. But whose expectations? Different people expect different things. To keep things simple, let’s consider just two perspectives for now. Does the product do what the people making it (stakeholders, developers, product managers, testers…) expect? Does it do what the users expect? I assume some readers will now feel their blood boiling and jump in to say that this is an oversimplification. There has never been a company in the history of software development where stakeholders, developers, testers and product managers all expected the same things. But that’s not the problem we’re solving now; there are plenty of good solutions for that kind of alignment. Of course, different groups of users also expect different things, but that’s not what we’re solving here either. So consider a group of users somewhere on the persona spectrum who expect the same thing, and a group of product makers who have somehow become aligned, just so we can clear out the extremes.

If a product feature passes the unit tests, acceptance tests, user tests and whatever else people could use to evaluate its fitness, then it’s acceptable. It matches the expectations of both groups. At the other extreme, if it fails from both perspectives, it’s clearly a bug.

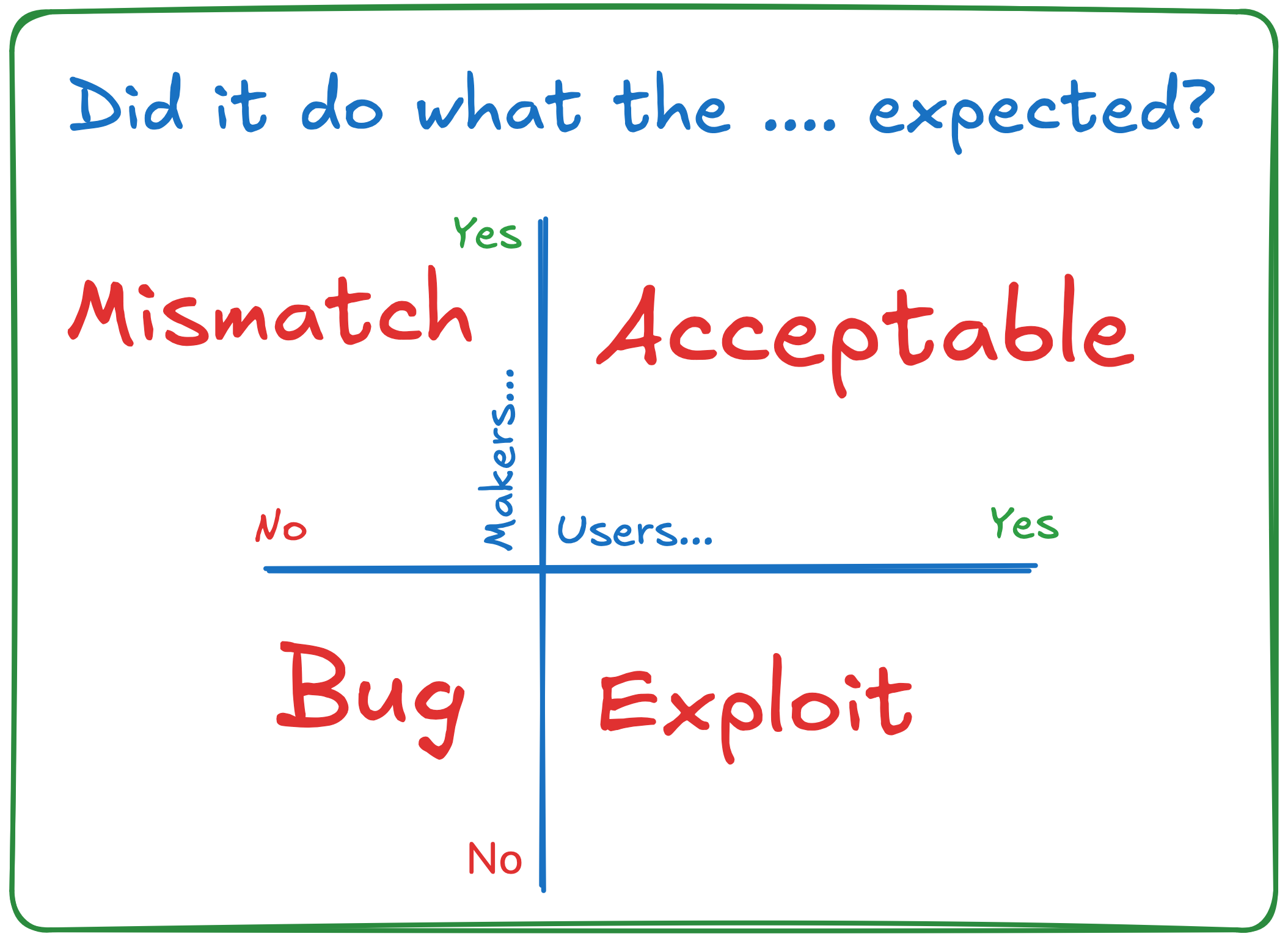

But what’s in the remaining two quadrants? That’s usually where predatory pricing around feature requests fits in.

When the feature does what the product makers expect, but not what the users expect, the problem usually gets labelled as “the user is not technical enough”, or some less kind variant that makes dubious claims about the user’s IQ. To take a cue from Kat Holmes, there’s a mismatch between the design and the user’s capabilities. Thinking about it as a mismatch removes the need to argue about whether the design is too smart or the user is too stupid. There’s a mismatch, and we may need to deal with it. If the only person technical enough to understand the user interface is Data from Star Trek, the product doesn’t have a very big market.

If the feature does what the user expects but not what the product makers expect, you get situations like someone earning unplanned discounts by ordering negative hamburgers from your sales terminal. Rather than being too stupid, the user in this zone is too smart. It’s effectively an exploit.

Instead of just considering right and wrong, there are four categories here: Bugs, Exploits, Acceptable and Mismatched functions. And of course, being a consultant, I can now claim that I invented a new model named after their initial letters: BEAM.

Joking aside, the reason I think this is a good way to think about problems is that straight bugs just tell us that we messed up. That’s a bit depressing. But exploits and mismatches are hidden product opportunities. That’s very optimistic.
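As a rough illustration only, the BEAM quadrants boil down to two yes/no questions: does the feature meet the product makers’ expectations, and does it meet the users’ expectations? The Python sketch below assumes both answers are already known as booleans; the names `Beam`, `classify` and `is_product_opportunity` are hypothetical, made up for this example.

```python
from enum import Enum


class Beam(Enum):
    """The four BEAM quadrants (hypothetical names for illustration)."""
    BUG = "Bug"
    EXPLOIT = "Exploit"
    ACCEPTABLE = "Acceptable"
    MISMATCH = "Mismatch"


def classify(meets_maker_expectations: bool, meets_user_expectations: bool) -> Beam:
    """Place a feature in a quadrant based on whose expectations it meets."""
    if meets_maker_expectations and meets_user_expectations:
        # Both groups are satisfied.
        return Beam.ACCEPTABLE
    if meets_maker_expectations:
        # Works as the makers designed it, but not for these users.
        return Beam.MISMATCH
    if meets_user_expectations:
        # Users get value the makers did not plan for.
        return Beam.EXPLOIT
    # Neither group is satisfied.
    return Beam.BUG


def is_product_opportunity(category: Beam) -> bool:
    """Exploits and mismatches are the quadrants that hide opportunities."""
    return category in (Beam.EXPLOIT, Beam.MISMATCH)
```

For example, the negative-hamburger discount lands in `classify(False, True)`, an exploit, while an interface only Data could operate lands in `classify(True, False)`, a mismatch. Of course, in practice the hard part is answering the two questions, not the lookup itself.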

Finding a user with a mismatch typically means that our persona definitions are too narrow, and that by considering a wider spectrum we have a significant opportunity to expand the appeal of our product to more people. Those additional users on the spectrum have the same motivations and same needs as the primary persona, and probably want almost the same product features, but the mismatch is stopping them from truly benefiting from our product. In most cases, a large part of the work required to make that person successful is already done. We just need to address the mismatches.

Finding a user with an exploit is a sign that someone is getting unexpected value from our product, or getting expected value in an unexpected way. Some of these people will be truly malicious and need to be blocked, but others have just figured out a way to use our product that we didn’t expect. If someone is willing to jump through hoops to use a product in a sub-optimal way, we have a chance to help them meet their true needs better.

Holmes makes a big point in her book that fixing issues for individual users with mismatches isn’t economically viable. Instead, she suggests that we solve for one and extend to many. Finding a mismatch or an exploit is a great opportunity to improve our products for everyone.

Cover photo by Brian Wangenheim on Unsplash.